Anthropic's Claude AI: Web Search Powered by Multi-Agent Systems

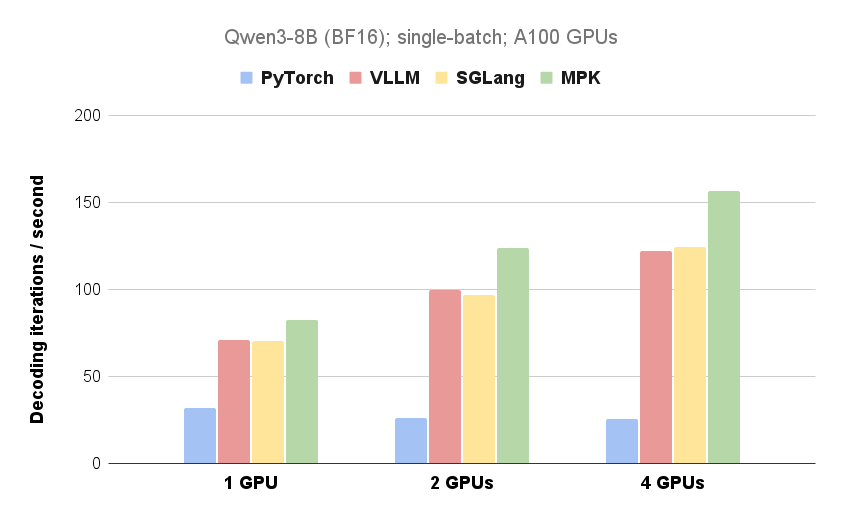

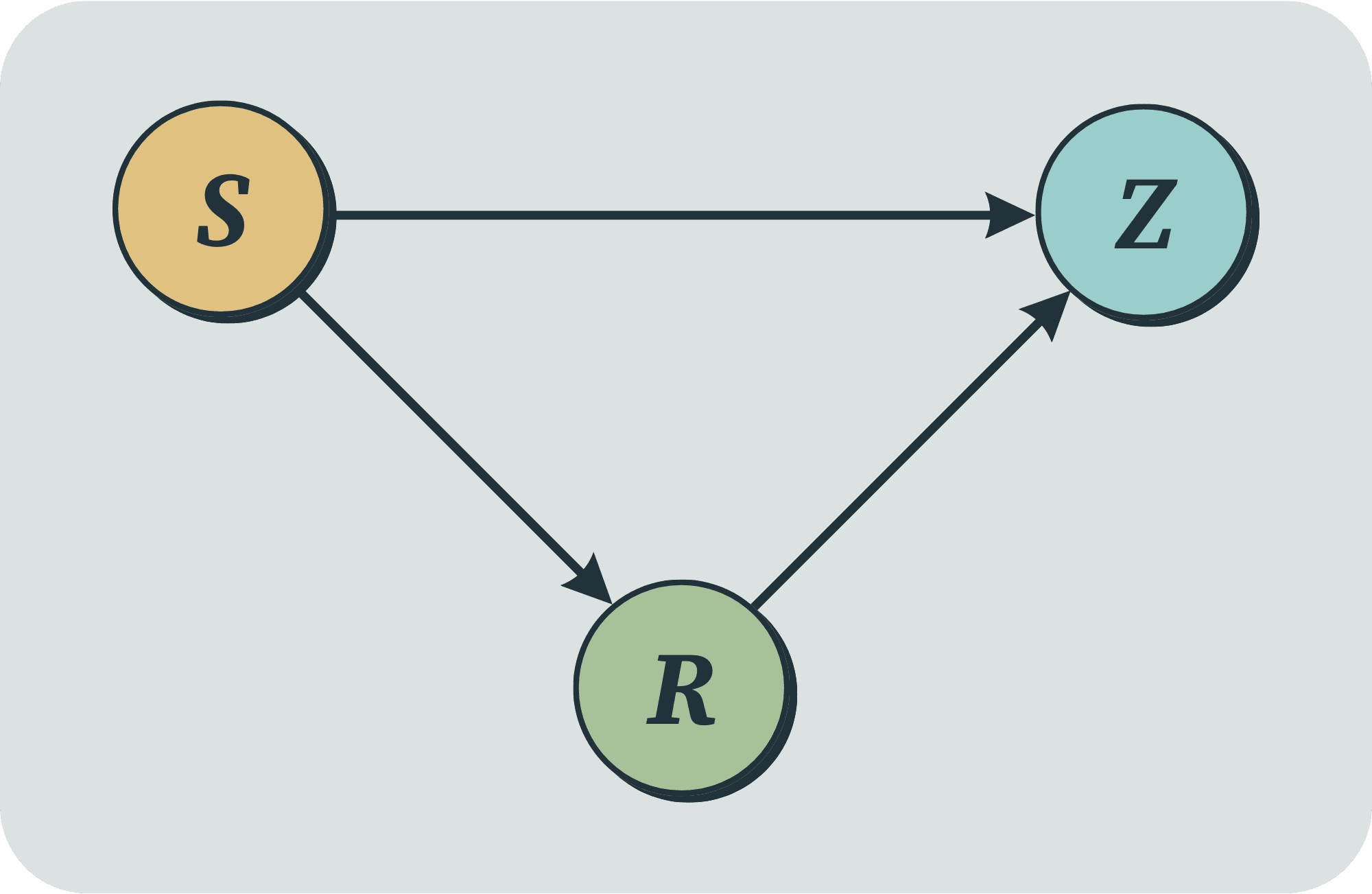

Anthropic has added a Research capability to Claude, its large language model. The feature uses a multi-agent system to search the web, Google Workspace, and any connected integrations in order to accomplish complex tasks. The post describing the feature details the system's architecture, tool design, and prompt engineering, and highlights how multi-agent collaboration, parallel search, and dynamic information retrieval improve search efficiency. Multi-agent systems consume more tokens than single-agent systems, but they significantly outperform them on tasks that require broad search and parallel processing. In internal evaluations, the system does especially well on breadth-first queries, which involve exploring multiple directions at the same time.
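To make the breadth-first, parallel pattern more concrete, here is a minimal Python sketch of a lead agent that splits a query into several directions and runs one subagent per direction concurrently. It is an illustration under stated assumptions, not Anthropic's implementation: the names `fake_search`, `subagent`, `plan`, `lead_agent`, and `run_research` are all hypothetical, the search tool is a stub that returns canned text, and the planning step is a fixed list where a real system would presumably ask a model to decompose the task.

```python
import asyncio


async def fake_search(subquery: str) -> str:
    """Hypothetical stand-in for a real search tool; returns canned text."""
    await asyncio.sleep(0.01)  # simulate network latency
    return f"findings for {subquery!r}"


async def subagent(subquery: str) -> dict:
    """One worker explores a single direction and reports back."""
    result = await fake_search(subquery)
    return {"subquery": subquery, "result": result}


def plan(query: str) -> list[str]:
    """Toy decomposition (assumption): three fixed aspects per query."""
    aspects = ("background", "recent news", "key players")
    return [f"{query}: {aspect}" for aspect in aspects]


async def lead_agent(query: str) -> list[dict]:
    """Fan out one subagent per subquery, then collect the reports."""
    subqueries = plan(query)
    # asyncio.gather runs the coroutines concurrently and returns
    # their results in the same order as the inputs.
    return await asyncio.gather(*(subagent(q) for q in subqueries))


def run_research(query: str) -> list[dict]:
    """Synchronous entry point for the sketch."""
    return asyncio.run(lead_agent(query))


if __name__ == "__main__":
    for report in run_research("AI agents"):
        print(report["subquery"], "->", report["result"])
```

Because the subagents run concurrently, the wall-clock time is roughly that of the slowest direction instead of the sum of all of them. The trade-off matches the one noted above: each subagent does its own work, so total token use grows with the number of directions explored.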