The Ethical Quandary of LLMs: Why I've Stopped Using Them

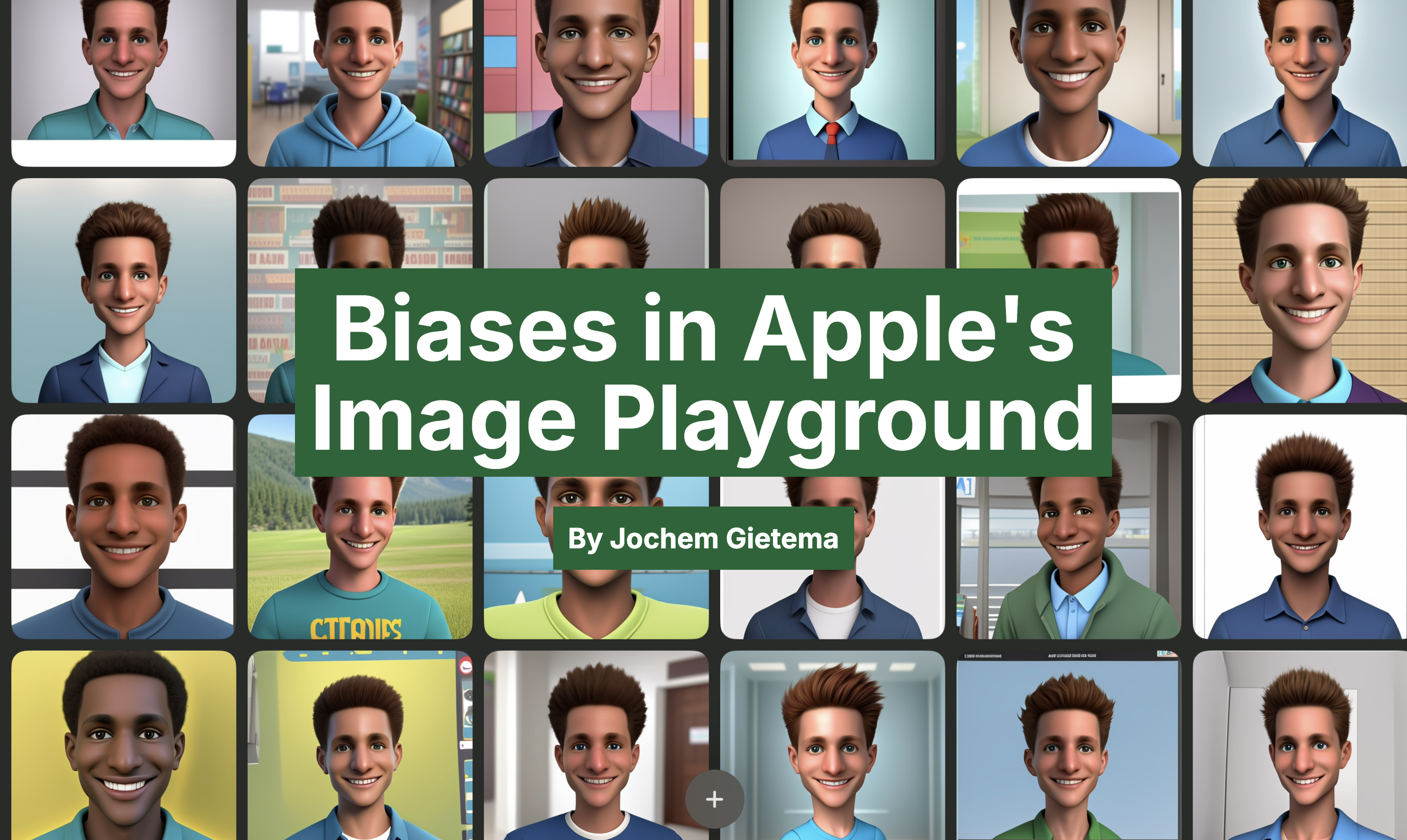

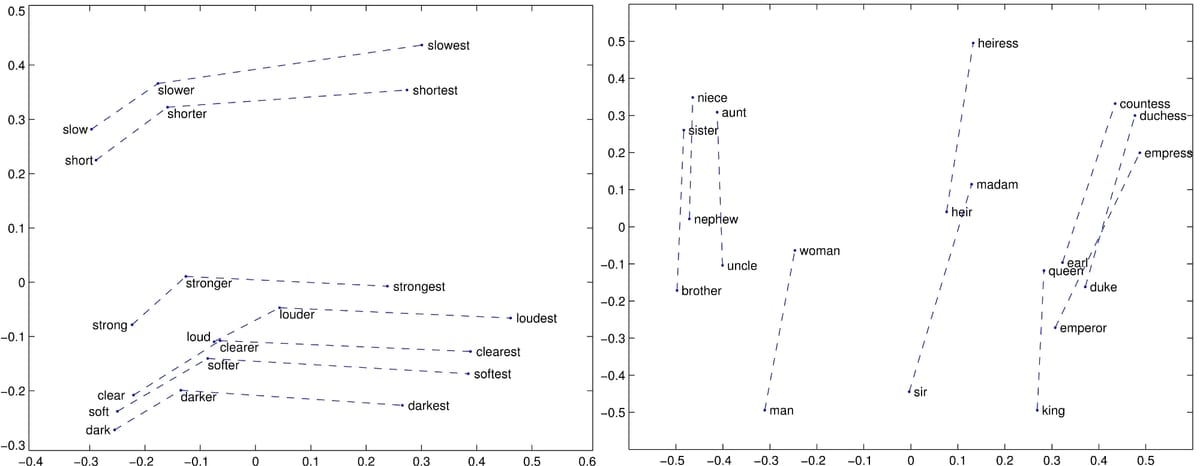

This post examines the ethical concerns surrounding Large Language Models (LLMs) and explains the author's decision to stop using them. The author explores five issues: high energy consumption, privacy concerns in how training data is sourced, the potential for job displacement, the risk of misinformation from biases and inaccuracies, and the concentration of power in the hands of a few large tech companies. The author argues that using LLMs without actively addressing these concerns is unethical.