GPT-5: A Deep Dive into Pricing, Model Card, and Key Features

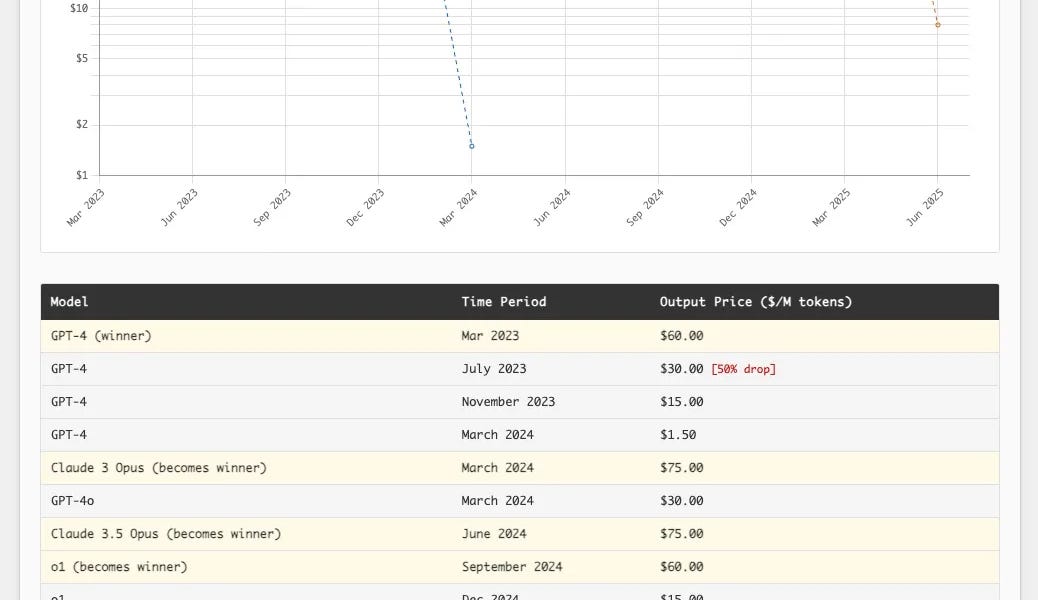

OpenAI's GPT-5 family has arrived. It is not a revolutionary leap, but it is noticeably more reliable and usable than its predecessors. In ChatGPT, GPT-5 is a hybrid system that routes each request to a different model depending on how difficult the problem is. The API instead exposes three models (regular, mini, and nano), each of which can run at one of four reasoning levels. The models accept up to 272,000 input tokens and can produce up to 128,000 output tokens; they take text and images as input but output text only. Pricing is aggressive and undercuts competing models. GPT-5 also hallucinates less, follows instructions better, and is less sycophantic than earlier models, and it was trained with a new approach to safety. It is particularly strong at writing, coding, and healthcare questions. Prompt injection, however, remains an unsolved problem.
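To make the token limits concrete, here is a minimal sketch of a pre-flight check that a request fits within the 272,000-token input and 128,000-token output limits quoted above. The names `RequestPlan` and `check_budget` are hypothetical and not part of any OpenAI library, and the token counts are assumed to come from whatever tokenizer you already use.

```python
from __future__ import annotations

from dataclasses import dataclass

# Limits as quoted in the summary above.
MAX_INPUT_TOKENS = 272_000
MAX_OUTPUT_TOKENS = 128_000


@dataclass
class RequestPlan:
    """Hypothetical description of a planned request."""
    input_tokens: int       # tokens in the prompt, counted elsewhere
    max_output_tokens: int  # output budget you intend to request


def check_budget(plan: RequestPlan) -> list[str]:
    """Return a list of problems; an empty list means the plan fits."""
    problems = []
    if plan.input_tokens > MAX_INPUT_TOKENS:
        over = plan.input_tokens - MAX_INPUT_TOKENS
        problems.append(f"input exceeds limit by {over} tokens")
    if plan.max_output_tokens > MAX_OUTPUT_TOKENS:
        over = plan.max_output_tokens - MAX_OUTPUT_TOKENS
        problems.append(f"output budget exceeds limit by {over} tokens")
    return problems


if __name__ == "__main__":
    # A 300,000-token prompt is 28,000 tokens over the input limit.
    for problem in check_budget(RequestPlan(300_000, 4_000)):
        print(problem)
```

A check like this only guards against oversized requests on the client side; the API remains the authority on what it accepts.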
