Embedding Dimensions: From 300 to 4096, and Beyond

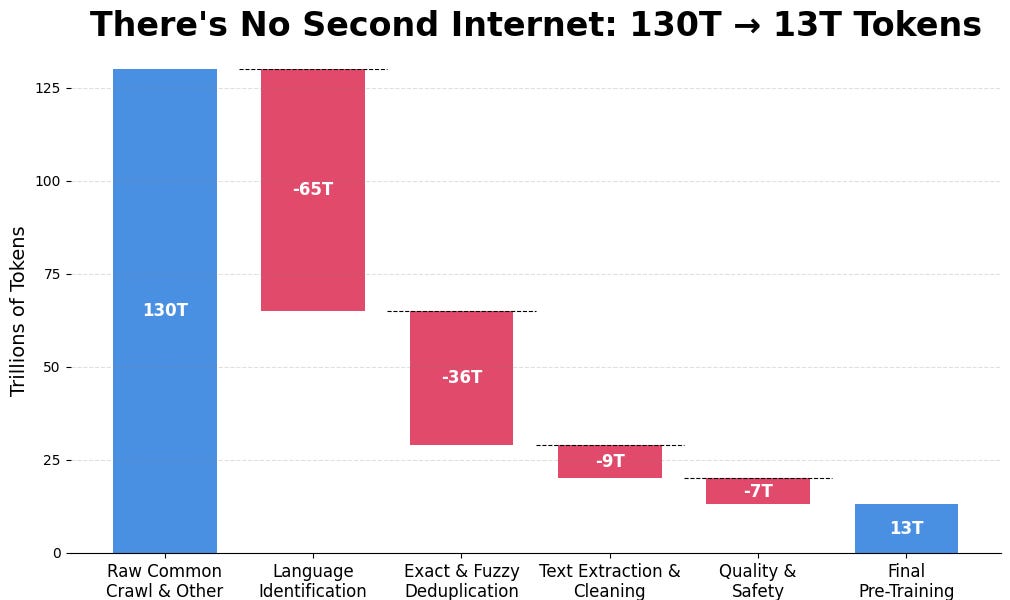

A few years ago, embeddings of 200 to 300 dimensions were common. With the rise of deep learning models such as BERT and GPT, and with advances in GPU computing, embedding dimensionality has grown sharply: from the 768 dimensions of BERT (base), to the 1536 dimensions of OpenAI's GPT-family embedding models (for example, text-embedding-ada-002), and now to models with 4096 dimensions or more. The growth is driven by architectural changes (Transformers), larger training datasets, the rise of platforms like Hugging Face, and advances in vector databases. Higher dimensionality can improve downstream performance, but it also raises storage and inference costs, since the memory footprint of each vector and the cost of each similarity computation both scale linearly with the number of dimensions. Recent research explores more efficient embedding representations, such as Matryoshka representation learning, which aims for a better balance between performance and efficiency.
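To make the storage trade-off concrete, here is a minimal sketch in Python. It estimates the raw size of one million float32 vectors at several of the dimensionalities mentioned above, and shows the truncate-then-renormalize step typically used with Matryoshka-style embeddings. The function names `index_size_bytes` and `truncate_and_renormalize` are hypothetical, chosen for illustration. The estimate ignores index overhead and compression. The truncation step assumes the model was trained with a Matryoshka objective, since cutting off dimensions from an ordinary embedding usually degrades quality much more.

```python
import math

BYTES_PER_FLOAT32 = 4  # assumption: vectors are stored as uncompressed float32


def index_size_bytes(num_vectors: int, dim: int,
                     bytes_per_value: int = BYTES_PER_FLOAT32) -> int:
    """Raw storage for dense vectors, ignoring any index or metadata overhead."""
    return num_vectors * dim * bytes_per_value


def truncate_and_renormalize(vec: list[float], k: int) -> list[float]:
    """Keep the first k coordinates and rescale them to unit length.

    This is how a Matryoshka-trained embedding is typically shortened;
    it is only a sketch and does not train or load any model.
    """
    head = list(vec[:k])
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        return head  # nothing to rescale
    return [x / norm for x in head]


if __name__ == "__main__":
    # Storage grows linearly with the number of dimensions.
    for dim in (300, 768, 1536, 4096):
        gib = index_size_bytes(1_000_000, dim) / 2**30
        print(f"{dim:>5} dims: {gib:.2f} GiB per million float32 vectors")

    # Shorten a toy 4-dimensional vector to its first 2 dimensions.
    print(truncate_and_renormalize([3.0, 4.0, 12.0, 0.5], 2))
```

Because the cost is linear in the dimension, halving the number of dimensions halves the raw storage, which is the kind of saving that shortened embeddings are meant to provide.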